Upgrade from 8.1.0.1 to 8.1.0.2 & 8.1.0.2.1

1. Upgrade From 8.1.0.1 to 8.1.0.2/8.1.0.2.1 (Selected Services)

RDAF Platform: From 8.1.0.1 to 8.1.0.2 (Selected Services)

AIOps (OIA) Application: From 8.1.0.1 to 8.1.0.2, 8.1.0.2.1 (Selected Services)

1.1. Prerequisites

Before proceeding with this upgrade, please verify that the prerequisites below are met.

Currently deployed CLI and RDAF services are running the below versions.

- RDAF Deployment CLI version: 1.4.1

- Infra Services tag: 1.0.4

- Platform Services and RDA Worker tag: 8.1.0.1

- OIA Application Services tag: 8.1.0.1

- CloudFabrix recommends taking VMware VM snapshots where RDA Fabric infra/platform/applications are deployed

Note

- Check the disk space on all Platform and Service VMs using the command below. The disk usage (Use% column) should be less than 80%.

rdauser@oia-125-216:~/collab-3.7-upgrade$ df -kh

Filesystem Size Used Avail Use% Mounted on

udev 32G 0 32G 0% /dev

tmpfs 6.3G 357M 6.0G 6% /run

/dev/mapper/ubuntu--vg-ubuntu--lv 48G 12G 34G 26% /

tmpfs 32G 0 32G 0% /dev/shm

tmpfs 5.0M 0 5.0M 0% /run/lock

tmpfs 32G 0 32G 0% /sys/fs/cgroup

/dev/loop0 64M 64M 0 100% /snap/core20/2318

/dev/loop2 92M 92M 0 100% /snap/lxd/24061

/dev/sda2 1.5G 309M 1.1G 23% /boot

/dev/sdf 50G 3.8G 47G 8% /var/mysql

/dev/loop3 39M 39M 0 100% /snap/snapd/21759

/dev/sdg 50G 541M 50G 2% /192.168-data

/dev/loop4 92M 92M 0 100% /snap/lxd/29619

/dev/loop5 39M 39M 0 100% /snap/snapd/21465

/dev/sde 15G 140M 15G 1% /zookeeper

/dev/sdd 30G 884M 30G 3% /kafka-logs

/dev/sdc 50G 3.3G 47G 7% /opt

/dev/sdb 50G 29G 22G 57% /var/lib/docker

/dev/sdi 25G 294M 25G 2% /graphdb

/dev/sdh 50G 34G 17G 68% /opensearch

/dev/loop6 64M 64M 0 100% /snap/core20/2379
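The 80% check can also be scripted. The following is a minimal sketch and not part of the RDAF tooling: the function name `over_threshold` is hypothetical, and skipping `/dev/loop*` devices (read-only snap images that always report 100%) is an assumption made for illustration. It reads `df -P` style output on standard input and prints every mount point at or above the given threshold.

```shell
# Hypothetical helper (not an RDAF command): print mount points whose Use%
# is at or above a threshold, reading `df -P` style output from stdin.
over_threshold() {
  local limit="${1:-80}"
  awk -v limit="$limit" '
    NR == 1 { next }                 # skip the header row
    $1 ~ /^\/dev\/loop/ { next }     # assumption: ignore read-only snap images
    {
      use = $5
      sub(/%$/, "", use)             # "57%" becomes "57"
      if (use + 0 >= limit) print $6, use "%"
    }'
}

# Assumed usage on each VM:
#   df -P | over_threshold 80
```

An empty result means no filesystem has reached the threshold. Mount points that contain spaces are not handled by this sketch.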

- On an HA setup, verify that all MariaDB nodes are in sync using the commands below before starting the upgrade

Tip

Please run the below commands on the VM host where RDAF deployment CLI was installed and rdafk8s setup command was run. The mariadb configuration is read from /opt/rdaf/rdaf.cfg file.

MARIADB_HOST=$(grep -A3 haproxy /opt/rdaf/rdaf.cfg | grep advertised_external_host | awk '{print $3}')

MARIADB_USER=$(grep -A3 mariadb /opt/rdaf/rdaf.cfg | grep user | awk '{print $3}' | base64 -d)

MARIADB_PASSWORD=$(grep -A3 mariadb /opt/rdaf/rdaf.cfg | grep password | awk '{print $3}' | base64 -d)

mysql -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -e "show status like 'wsrep_local_state_comment';"

Please verify that the mariadb cluster state is in Synced state.

+---------------------------+--------+

| Variable_name | Value |

+---------------------------+--------+

| wsrep_local_state_comment | Synced |

+---------------------------+--------+

Please run the below command and verify that the mariadb cluster size is 3.

mysql -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -e "SHOW GLOBAL STATUS LIKE 'wsrep_cluster_size';"

+--------------------+-------+

| Variable_name | Value |

+--------------------+-------+

| wsrep_cluster_size | 3 |

+--------------------+-------+
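The two MariaDB checks can be combined into one pass/fail test. This is a sketch under stated assumptions, not a CloudFabrix-provided script: `galera_ok` is a hypothetical helper, and the expected cluster size of 3 is taken from the text above. The commented usage relies on the standard `mysql` client options `-N` (omit column names) and `-B` (tab-separated output).

```shell
# Hypothetical helper: succeed only when the node reports the state and
# cluster size that the checks above expect (Synced, 3 nodes by default).
galera_ok() {
  local state="$1" size="$2" expected_size="${3:-3}"
  if [ "$state" != "Synced" ]; then
    echo "node state is '$state', expected 'Synced'" >&2
    return 1
  fi
  if [ "$size" != "$expected_size" ]; then
    echo "cluster size is '$size', expected '$expected_size'" >&2
    return 1
  fi
  echo "MariaDB cluster is Synced with $size nodes"
}

# Assumed usage, reusing the MARIADB_* variables set earlier:
#   q() { mysql -N -B -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -e "$1" | awk '{print $2}'; }
#   galera_ok "$(q "SHOW STATUS LIKE 'wsrep_local_state_comment'")" \
#             "$(q "SHOW GLOBAL STATUS LIKE 'wsrep_cluster_size'")"
```

A non-zero exit status indicates that the upgrade should not be started until the cluster has recovered.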

Warning

Make sure all of the above pre-requisites are met before proceeding with the upgrade process.

Warning

Kubernetes: Although a Kubernetes-based RDA Fabric deployment supports zero-downtime upgrades, it is recommended to schedule a maintenance window for upgrading RDAF Platform and AIOps services to a newer version.

Important

Please make sure a full backup of the RDAF platform system is completed before performing the upgrade.

Kubernetes: Please run the below backup command to take the backup of application data.

Run the below command on the RDAF management system and make sure the Kubernetes PODs are NOT in a restarting state (applicable only to Kubernetes environments)

Note

If the following environment variables exist under the Alert_ingester and event_consumer services in values.yaml (on the CLI-installed VM, at /opt/rdaf/deployment-scripts/values.yaml), please remove them:

- INBOUND_PARTITION_WORKERS_MAX

- OUTBOUND_TOPIC_WORKERS_MAX

- OUTBOUND_WORKERS_MAX
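To find out whether any of these variables are still present, a search along the following lines may help. It is a minimal sketch: `find_stale_worker_vars` is a hypothetical name, and the function searches the whole file rather than only the two service sections, so each match should be reviewed before it is removed.

```shell
# Hypothetical helper: list lines in a values.yaml file that still set any of
# the three variables named above. Prints "line-number:line" for each match
# and returns non-zero when nothing is found (grep semantics).
find_stale_worker_vars() {
  local file="${1:-/opt/rdaf/deployment-scripts/values.yaml}"
  grep -nE '(INBOUND_PARTITION_WORKERS_MAX|OUTBOUND_TOPIC_WORKERS_MAX|OUTBOUND_WORKERS_MAX)' "$file"
}

# Assumed usage:
#   find_stale_worker_vars || echo "no stale variables found"
```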

- Verify that RDAF deployment rdafcli version is 1.4.1 on the VM where the CLI was installed for the docker on-prem registry managing Kubernetes or Non-Kubernetes deployments.

- On-premise docker registry service version is 1.0.4

0889e08f0871 docker1.cloudfabrix.io:443/external/docker-registry:1.0.4 "/entrypoint.sh /bin…" 7 days ago Up 7 days deployment-scripts-docker-registry-1

- RDAF Infrastructure services version is 1.0.4 except for the below services.

- rda-minio: version is RELEASE.2024-12-18T13-15-44Z

Run the below command to get rdafk8s Infra service details

+--------------------------+-----------------+-------------------+--------------+--------------------------------+

| Name | Host | Status | Container Id | Tag |

+--------------------------+-----------------+-------------------+--------------+--------------------------------+

| rda-nats | 192.168.108.114 | Up 19 Minutes ago | bbb50d2dacc5 | 1.0.4 |

| rda-minio | 192.168.108.114 | Up 19 Minutes ago | d26148d4bf44 | RELEASE.2024-12-18T13-15-44Z |

| rda-mariadb | 192.168.108.114 | Up 19 Minutes ago | 02975e0eec89 | 1.0.4 |

| rda-opensearch | 192.168.108.114 | Up 18 Minutes ago | 1494be76f694 | 1.0.4 |

+--------------------------+-----------------+-------------------+--------------+--------------------------------+

- RDAF Platform services version is 8.1.0.1

Run the below command to get RDAF Platform services details

+----------------+-----------------+-----------------+--------------+---------+

| Name           | Host            | Status          | Container Id | Tag     |

+----------------+-----------------+-----------------+--------------+---------+

| rda-api-server | 192.168.108.119 | Up 14 Hours ago | 2ca4370a175a | 8.1.0.1 |

| rda-api-server | 192.168.108.120 | Up 14 Hours ago | cce0d6bcba36 | 8.1.0.1 |

| rda-registry   | 192.168.108.120 | Up 14 Hours ago | e029a9ff96fe | 8.1.0.1 |

| rda-registry   | 192.168.108.119 | Up 14 Hours ago | eacbc82ae8c9 | 8.1.0.1 |

| rda-identity   | 192.168.108.120 | Up 14 Hours ago | 45409c977c7c | 8.1.0.1 |

| rda-identity   | 192.168.108.119 | Up 14 Hours ago | 584458932e2c | 8.1.0.1 |

+----------------+-----------------+-----------------+--------------+---------+

- RDAF Worker version is 8.1.0.1

Run the below command to get RDAF Worker details

+------------+----------------+------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+----------------+------------+--------------+---------+

| rda_worker | 192.168.125.63 | Up 7 weeks | cfe1fe65c692 | 8.1.0.1 |

+------------+----------------+------------+--------------+---------+

- RDAF OIA Application services version is 8.1.0.1

Run the below command to get RDAF App services details

+-------------------------------+-----------------+-----------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+-------------------------------+-----------------+-----------------+--------------+---------+

| rda-alert-correlator | 192.168.108.118 | Up 14 Hours ago | afdbbe6453e4 | 8.1.0.1 |

| rda-alert-correlator | 192.168.108.117 | Up 14 Hours ago | 631b7978dcb0 | 8.1.0.1 |

| rda-alert-ingester | 192.168.108.117 | Up 14 Hours ago | 33322e0b9cb9 | 8.1.0.1 |

| rda-alert-ingester | 192.168.108.118 | Up 14 Hours ago | 8178c043bd04 | 8.1.0.1 |

| rda-alert-processor | 192.168.108.117 | Up 14 Hours ago | b342b582ea1d | 8.1.0.1 |

| rda-alert-processor | 192.168.108.118 | Up 14 Hours ago | b6f85413c2df | 8.1.0.1 |

+-------------------------------+-----------------+-----------------+--------------+---------+

Warning

Non-Kubernetes: Upgrading RDAF Platform and AIOps application services is a disruptive operation. Schedule a maintenance window before upgrading RDAF Platform and AIOps services to a newer version.

Important

Please make sure a full backup of the RDAF platform system is completed before performing the upgrade.

Non-Kubernetes: Please run the below backup command to take the backup of application data.

Note: Please make sure this backup-dir is mounted across all infra and CLI VMs.

Note

If the following environment variables exist under the Alert_ingester and event_consumer services in values.yaml (on the CLI-installed VM, at /opt/rdaf/deployment-scripts/values.yaml), please remove them:

- INBOUND_PARTITION_WORKERS_MAX

- OUTBOUND_TOPIC_WORKERS_MAX

- OUTBOUND_WORKERS_MAX

- Verify that RDAF deployment rdafcli version is 1.4.1 on the VM where the CLI was installed for the docker on-prem registry managing Kubernetes or Non-Kubernetes deployments.

- On-premise docker registry service version is 1.0.4

173d38eebeab docker1.cloudfabrix.io:443/external/docker-registry:1.0.4 "/entrypoint.sh /bin…" 45 hours ago Up 45 hours deployment-scripts-docker-registry-1

- RDAF Infrastructure services version is 1.0.4 except for the below services.

- rda-minio: version is RELEASE.2024-12-18T13-15-44Z

Run the below command to get RDAF Infra service details

+-------------------+----------------+-------------+--------------+------------------------------+

| Name | Host | Status | Container Id | Tag |

+-------------------+----------------+-------------+--------------+------------------------------+

| nats | 192.168.125.63 | Up 2 months | aff2eb1f37c9 | 1.0.4 |

| minio | 192.168.125.63 | Up 2 months | ed6bb3ea036a | RELEASE.2024-12-18T13-15-44Z |

| mariadb | 192.168.125.63 | Up 2 months | 616a98d6471c | 1.0.4 |

| opensearch | 192.168.125.63 | Up 2 months | 7edeede52a9b | 1.0.4 |

| kafka | 192.168.125.63 | Up 2 months | d1426429da4c | 1.0.4 |

| graphdb[operator] | 192.168.125.63 | Up 2 months | 8a53795f6ee4 | 1.0.4 |

| graphdb[server] | 192.168.125.63 | Up 2 months | 06c187c7dfa2 | 1.0.4 |

| haproxy | 192.168.125.63 | Up 2 months | fde40536be0c | 1.0.4 |

+-------------------+----------------+-------------+--------------+------------------------------+

- RDAF Platform services version is 8.1.0.1

Run the below command to get RDAF Platform services details

+--------------------------+----------------+------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+--------------------------+----------------+------------+--------------+---------+

| rda_api_server | 192.168.125.63 | Up 7 weeks | c6500e23738f | 8.1.0.1 |

| rda_registry | 192.168.125.63 | Up 7 weeks | 34f008691fd4 | 8.1.0.1 |

| rda_scheduler | 192.168.125.63 | Up 7 weeks | 8b358f65a7d3 | 8.1.0.1 |

| rda_collector | 192.168.125.63 | Up 7 weeks | 1888441693c0 | 8.1.0.1 |

| rda_identity | 192.168.125.63 | Up 7 weeks | 10e43ae93430 | 8.1.0.1 |

| rda_asm | 192.168.125.63 | Up 7 weeks | f98c2c79539a | 8.1.0.1 |

+--------------------------+----------------+------------+--------------+---------+

- RDAF Worker version is 8.1.0.1

Run the below command to get RDAF Worker details

+------------+----------------+------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+----------------+------------+--------------+---------+

| rda_worker | 192.168.125.63 | Up 7 weeks | bc46556f64d2 | 8.1.0.1 |

+------------+----------------+------------+--------------+---------+

- RDAF OIA Application services version is 8.1.0.1

Run the below command to get RDAF App services details

+-----------------------------------+----------------+------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+-----------------------------------+----------------+------------+--------------+---------+

| cfx-rda-app-controller | 192.168.125.63 | Up 7 weeks | 1bae5abb4e9c | 8.1.0.1 |

| cfx-rda-reports-registry | 192.168.125.63 | Up 7 weeks | 925a97ecb0a3 | 8.1.0.1 |

| cfx-rda-notification-service | 192.168.125.63 | Up 7 weeks | 1628da0a7a30 | 8.1.0.1 |

| cfx-rda-file-browser | 192.168.125.63 | Up 7 weeks | 237c85c6cb9f | 8.1.0.1 |

| cfx-rda-configuration-service | 192.168.125.63 | Up 7 weeks | 0fe8f3ee7596 | 8.1.0.1 |

| cfx-rda-alert-ingester | 192.168.125.63 | Up 7 weeks | d58452342e72 | 8.1.0.1 |

| cfx-rda-webhook-server | 192.168.125.63 | Up 7 weeks | f3578f725d9c | 8.1.0.1 |

+-----------------------------------+----------------+------------+--------------+---------+

1.2. Upgrade Steps

1.2.1 Download the new Docker Images

Log in to the VM where the rdaf deployment CLI was installed for the on-premise docker registry and for managing Kubernetes and Non-Kubernetes deployments.

Download the new docker image tags for RDAF Platform and OIA (AIOps) Application services and wait until all of the images are downloaded.

Note

If the download of the images fails, please re-execute the above command.

Run the below command to verify above mentioned tags are downloaded for all of the RDAF Platform and OIA (AIOps) Application services.

Please make sure 8.1.0.2 image tag is downloaded for the below RDAF Platform services.

- rda_asm

- rda_collector

- rda_api_server

- rda_worker

- Event Gateway

Please make sure 8.1.0.2 image tag is downloaded for the below RDAF OIA (AIOps) Application services.

- rda-alert-ingester

- rda-alert-processor

- rda-alert-processor-companion

Please make sure 8.1.0.2.1 image tag is downloaded for the below RDAF OIA (AIOps) Application services.

- rda-event-consumer

- rda-collaboration

Downloaded Docker images are stored under the below path.

/opt/rdaf-registry/data/docker/registry/v2/ or /opt/rdaf/data/docker/registry/v2/

Run the below command to check the filesystem disk usage on the offline registry VM where the docker images are pulled.

If necessary, older image tags that are no longer in use can be deleted to free up disk space using the command below.

Note

Run the command below if /opt occupies more than 80% of the disk space or if the free capacity of /opt is less than 25GB.
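The two conditions in this note (usage above 80%, or less than 25GB free) can be evaluated with a small script before deciding whether cleanup is needed. The sketch below only evaluates the conditions and does not delete any images. `needs_cleanup` is a hypothetical helper, and treating 1GB as 1024 x 1024 one-kilobyte blocks is an assumption.

```shell
# Hypothetical helper: decide whether cleanup is advisable for one filesystem.
# Reads `df -Pk <path>` output (header plus one data row, sizes in 1 KiB
# blocks) from stdin and prints "cleanup recommended" or "ok".
needs_cleanup() {
  awk '
    NR == 2 {
      use = $5
      sub(/%$/, "", use)                    # "57%" becomes "57"
      free_gb = $4 / (1024 * 1024)          # KiB to GiB
      if (use + 0 > 80 || free_gb < 25) print "cleanup recommended"
      else print "ok"
    }'
}

# Assumed usage on the registry VM:
#   df -Pk /opt | needs_cleanup
```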

1.2.2 Upgrade RDAF Platform Services

Step-1: Run the below command to initiate upgrading the following RDAF Platform services.

rdafk8s platform upgrade --tag 8.1.0.2 --service rda-api-server --service rda-asm --service rda-collector

As the upgrade procedure is a non-disruptive upgrade, it puts the currently running PODs into Terminating state and newer version PODs into Pending state.

Step-2: Run the below command to check the status of the existing and newer PODs and make sure at least one instance of each Platform service is in Terminating state.

Step-3: Run the below command to put all Terminating RDAF platform service PODs into maintenance mode. It lists the POD IDs of the platform services that need to be put into maintenance mode, along with the rdac maintenance command to use.

Note

If maint_command.py script doesn't exist on RDAF deployment CLI VM, it can be downloaded using the below command.

Step-4: Copy & Paste the rdac maintenance command as below.

Step-5: Run the below command to verify the maintenance mode status of the RDAF platform services.

Step-6: Run the below command to delete the Terminating RDAF platform service PODs

for i in `kubectl get pods -n rda-fabric -l app_category=rdaf-platform | grep 'Terminating' | awk '{print $1}'`; do kubectl delete pod $i -n rda-fabric --force; done

Note

Wait for 120 seconds and repeat the above steps from Step-2 to Step-6 for the rest of the RDAF Platform service PODs.
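Before running the forced delete in Step-6, it can be useful to preview which PODs it would remove. The sketch below is an illustration only: `terminating_pods` is a hypothetical helper, and it assumes the default `kubectl get pods` column layout (NAME READY STATUS RESTARTS AGE). Unlike a plain `grep 'Terminating'`, it matches on the STATUS column only.

```shell
# Hypothetical helper: print the names of PODs whose STATUS column is exactly
# "Terminating", reading `kubectl get pods` output from stdin.
terminating_pods() {
  awk 'NR > 1 && $3 == "Terminating" { print $1 }'
}

# Assumed usage with the namespace and label from Step-6:
#   kubectl get pods -n rda-fabric -l app_category=rdaf-platform | terminating_pods
```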

Please wait till the new platform service PODs are in Running state and run the below command to verify their status and make sure they are running with 8.1.0.2 version.

+----------------------+-----------------+----------------+--------------+----------+

| Name | Host | Status | Container Id | Tag |

+----------------------+-----------------+----------------+--------------+----------+

| rda-api-server | 192.168.108.117 | Up 5 Hours ago | fda327eb1c5b | 8.1.0.2 |

| rda-api-server | 192.168.108.118 | Up 5 Hours ago | 4ea8906a4fbc | 8.1.0.2 |

| rda-registry | 192.168.108.117 | Up 1 Days ago | 2b40e501b898 | 8.1.0.1 |

| rda-registry | 192.168.108.118 | Up 1 Days ago | 2ab897175da3 | 8.1.0.1 |

| rda-identity | 192.168.108.117 | Up 1 Days ago | 772db9d128e4 | 8.1.0.1 |

| rda-identity | 192.168.108.118 | Up 1 Days ago | f5f947988c7a | 8.1.0.1 |

| rda-fsm | 192.168.108.118 | Up 1 Days ago | 36cb8d7c2fd2 | 8.1.0.1 |

| rda-fsm | 192.168.108.117 | Up 1 Days ago | f0bdaad2af77 | 8.1.0.1 |

| rda-asm | 192.168.108.117 | Up 5 Hours ago | ac3226389b66 | 8.1.0.2 |

| rda-asm | 192.168.108.118 | Up 5 Hours ago | f93533c4805a | 8.1.0.2 |

| rda-chat-helper | 192.168.108.118 | Up 1 Days ago | 275bdcf1b39a | 8.1.0.1 |

| rda-chat-helper | 192.168.108.117 | Up 1 Days ago | 3f82a2bb8c77 | 8.1.0.1 |

| rda-access-manager | 192.168.108.117 | Up 1 Days ago | 4570536616a4 | 8.1.0.1 |

| rda-access-manager | 192.168.108.118 | Up 1 Days ago | 5f8d95194a0e | 8.1.0.1 |

| rda-resource-manager | 192.168.108.118 | Up 1 Days ago | 01b77acafb0f | 8.1.0.1 |

| rda-resource-manager | 192.168.108.117 | Up 1 Days ago | db544835c22a | 8.1.0.1 |

| rda-scheduler | 192.168.108.118 | Up 1 Days ago | 2103b3a7f586 | 8.1.0.1 |

| rda-scheduler | 192.168.108.117 | Up 1 Days ago | 81de432b6ab3 | 8.1.0.1 |

| rda-collector | 192.168.108.118 | Up 5 Hours ago | b0527f543a3f | 8.1.0.2 |

| rda-collector | 192.168.108.117 | Up 5 Hours ago | bc0c48539795 | 8.1.0.2 |

+----------------------+-----------------+----------------+--------------+----------+
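Because only selected services are upgraded, the status table mixes 8.1.0.1 and 8.1.0.2 rows, which makes a visual check error-prone. The following sketch filters a status table for the services that were expected to move to the new tag. `not_on_tag` is a hypothetical helper, and it assumes the five-column layout shown above (Name, Host, Status, Container Id, Tag).

```shell
# Hypothetical helper: from a status table on stdin, print "name host tag" for
# each named service that is NOT running the expected tag.
# Usage: not_on_tag <expected-tag> <service-name>...
not_on_tag() {
  local expected="$1"
  shift
  awk -F'|' -v expected="$expected" -v names="$*" '
    function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
    BEGIN { n = split(names, a, " "); for (i = 1; i <= n; i++) want[a[i]] = 1 }
    NF >= 6 && (trim($2) in want) && trim($6) != expected {
      print trim($2), trim($3), trim($6)
    }'
}

# Assumed usage: save the status table to a file, then run
#   not_on_tag 8.1.0.2 rda-api-server rda-asm rda-collector < status.txt
```

No output means every named service in the table already runs the expected tag.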

Run the below command to check that one rda-scheduler service is elected as leader under the Site column.

+-------+----------------------------------------+-------------+--------------+----------+-------------+-----------------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+--------------+----------+-------------+-----------------+--------+--------------+---------------+--------------|

| Infra | api-server | True | rda-api-server | 9c0484af | | 11:41:50 | 8 | 31.33 | | |

| Infra | api-server | True | rda-api-server | 196558ed | | 11:40:23 | 8 | 31.33 | | |

| Infra | asm | True | rda-asm-5b8fb9 | bcbdaae5 | | 11:42:26 | 8 | 31.33 | | |

| Infra | asm | True | rda-asm-5b8fb9 | 232a58af | | 11:42:40 | 8 | 31.33 | | |

| Infra | collector | True | rda-collector- | d06fb56c | | 11:42:03 | 8 | 31.33 | | |

| Infra | collector | True | rda-collector- | a4c79e4c | | 11:41:59 | 8 | 31.33 | | |

| Infra | registry | True | rda-registry-6 | 2fd69950 | | 11:42:03 | 8 | 31.33 | | |

| Infra | registry | True | rda-registry-6 | fac544d6 | | 11:41:59 | 8 | 31.33 | | |

| Infra | scheduler | True | rda-scheduler- | b98afe88 | *leader* | 11:42:01 | 8 | 31.33 | | |

| Infra | scheduler | True | rda-scheduler- | e25a0841 | | 11:41:56 | 8 | 31.33 | | |

| Infra | worker | True | rda-worker-5b5 | 99bd054e | rda-site-01 | 11:33:40 | 8 | 31.33 | 0 | 0 |

| Infra | worker | True | rda-worker-5b5 | 0bfdcd98 | rda-site-01 | 11:33:34 | 8 | 31.33 | 0 | 0 |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+
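The leader check can be automated by counting scheduler rows marked `*leader*`. This is a minimal sketch assuming the column order shown in the table above (Pod-Type second, Site sixth). `scheduler_leaders` is a hypothetical helper name.

```shell
# Hypothetical helper: count scheduler rows whose Site column is "*leader*"
# in a pod listing table read from stdin. Exactly one leader is expected.
scheduler_leaders() {
  awk -F'|' '
    function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
    trim($3) == "scheduler" && trim($7) == "*leader*" { n++ }
    END { print n + 0 }'
}

# Assumed usage: save the pod listing to a file, then run
#   [ "$(scheduler_leaders < pods.txt)" = "1" ] && echo "leader elected"
```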

Run the below command to check that all services have ok status and do not report any failure messages.

Warning

For Non-Kubernetes deployment, upgrading RDAF Platform and AIOps application services is a disruptive operation when rolling-upgrade option is not used. Please schedule a maintenance window before upgrading RDAF Platform and AIOps services to newer version.

Run the below command to initiate upgrading the following RDAF Platform services with zero downtime

rdaf platform upgrade --tag 8.1.0.2 --rolling-upgrade --timeout 10 --service rda_collector --service rda_asm --service rda_api_server

Note

The timeout value <10> in the above command is in seconds.

Note

The rolling-upgrade option upgrades the Platform services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of Platform services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

During this upgrade sequence, RDAF platform continues to function without any impact to the application traffic.

After completing the Platform services upgrade on all VMs, it will ask for user confirmation to delete the older version Platform service PODs. The user has to provide YES to delete the old docker containers (in non-k8s)

192.168.108.122:5000/ubuntu-rda-client-api-server:8.1.0.2

2025-10-30 10:32:43,693 [rdaf.component.platform] INFO - Gathering platform container details.

2025-10-30 10:32:44,246 [rdaf.component.platform] INFO - Gathering rdac pod details.

+----------+------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+------------+---------+---------+--------------+-------------+------------+

| 7a4a239a | collector | 8.1.0.1 | 4:02:59 | a46780fed2d5 | None | True |

| fc561b34 | asm | 8.1.0.1 | 4:01:55 | bf6e4f48aa1e | None | True |

| 16bfb91a | api-server | 8.1.0.1 | 4:04:33 | 51faaac76f07 | None | True |

+----------+------------+---------+---------+--------------+-------------+------------+

Continue moving above pods to maintenance mode? [yes/no]: yes

2025-10-30 10:33:00,533 [rdaf.component.platform] INFO - Initiating Maintenance Mode...

2025-10-30 10:33:25,621 [rdaf.component.platform] INFO - Following container are in maintenance mode

+----------+------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+------------+---------+---------+--------------+-------------+------------+

| 16bfb91a | api-server | 8.1.0.1 | 4:05:08 | 51faaac76f07 | maintenance | False |

| fc561b34 | asm | 8.1.0.1 | 4:02:30 | bf6e4f48aa1e | maintenance | False |

| 7a4a239a | collector | 8.1.0.1 | 4:03:34 | a46780fed2d5 | maintenance | False |

+----------+------------+---------+---------+--------------+-------------+------------+

2025-10-30 10:33:25,622 [rdaf.component.platform] INFO - Waiting for timeout of 2 seconds...

2025-10-30 10:33:27,622 [rdaf.component.platform] INFO - Upgrading service: rda_collector on host 192.168.

Run the below command to initiate upgrading RDAF Platform services without the rolling-upgrade option (with downtime)

rdaf platform upgrade --tag 8.1.0.2 --service rda_collector --service rda_asm --service rda_api_server

Please wait till all of the new platform services are in Up state and run the below command to verify their status and make sure all of them are running with 8.1.0.2 version.

+--------------------------+-----------------+-------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+--------------------------+-----------------+-------------+--------------+---------+

| rda_api_server | 192.168.105.130 | Up 20 hours | 7214b4d63780 | 8.1.0.2 |

| rda_registry | 192.168.105.130 | Up 3 days | 6bbd6cd76549 | 8.1.0.1 |

| rda_scheduler | 192.168.105.130 | Up 2 days | 8e698a963876 | 8.1.0.1 |

| rda_collector | 192.168.105.130 | Up 20 hours | b8dab49e5e04 | 8.1.0.2 |

| rda_identity | 192.168.105.130 | Up 3 days | 51948b4b8a03 | 8.1.0.1 |

| rda_asm | 192.168.105.130 | Up 20 hours | c1c44fcdf83a | 8.1.0.2 |

| rda_fsm | 192.168.105.130 | Up 3 days | faa694ab225c | 8.1.0.1 |

| rda_chat_helper | 192.168.105.130 | Up 3 days | c86de27dff97 | 8.1.0.1 |

| cfx-rda-access-manager | 192.168.105.130 | Up 3 days | 0be5d7a6251c | 8.1.0.1 |

| cfx-rda-resource-manager | 192.168.105.130 | Up 3 days | 6d81351a9c75 | 8.1.0.1 |

| cfx-rda-user-preferences | 192.168.105.130 | Up 3 days | 2651db4f2672 | 8.1.0.1 |

| portal-backend | 192.168.105.130 | Up 3 days | e87d7be3ebd7 | 8.1.0.1 |

| portal-frontend | 192.168.105.130 | Up 3 days | 615e00b4b904 | 8.1.0.1 |

+--------------------------+-----------------+-------------+--------------+---------+

Run the below command to check that one rda-scheduler service is elected as leader under the Site column.

| Infra | scheduler | True | ec86b669f564 | f72697c7 | *leader* | 4:14:29 | 8 | 62.75 |

| Infra | scheduler | True | 7772293e6644 | 685f725f | | 4:14:14 | 8 | 62.75 |

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------|

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-initialization-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=1, Brokers=[1, 2, 3] |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-initialization-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=3, Brokers=[1, 2, 3] |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | service-status | ok | |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | 192.168-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+
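With many rows per service, a failed check is easy to overlook in the table above. The sketch below prints only the rows whose Status is not ok. `failed_checks` is a hypothetical helper, and it assumes the eight-column layout shown above, with Status as the seventh column and Message as the eighth.

```shell
# Hypothetical helper: read a health-check table from stdin and print every
# row whose Status column is not "ok".
# Exit status is 0 when all rows are ok, 1 otherwise.
failed_checks() {
  awk -F'|' '
    function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
    NF < 9 { next }                       # border and separator lines
    trim($2) == "Cat" { next }            # header row
    trim($8) != "ok" {
      bad = 1
      printf "%s: %s is %s (%s)\n", trim($3), trim($7), trim($8), trim($9)
    }
    END { exit (bad ? 1 : 0) }'
}

# Assumed usage: save the health-check table to a file, then run
#   failed_checks < healthcheck.txt && echo "all checks ok"
```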

1.2.3 Upgrade RDA Worker Services

Note

If the worker was deployed in an HTTP proxy environment, please make sure the required HTTP proxy environment variables are added in the /opt/rdaf/deployment-scripts/values.yaml file under the rda_worker configuration section as shown below before upgrading RDA Worker services.

rda_worker:

  terminationGracePeriodSeconds: 300

  replicas: 6

  sizeLimit: 1024Mi

  privileged: true

  resources:

    requests:

      memory: 100Mi

    limits:

      memory: 24Gi

  env:

    WORKER_GROUP: rda-prod-01

    CAPACITY_FILTER: cpu_load1 <= 7.0 and mem_percent < 95

    MAX_PROCESSES: '1000'

    RDA_ENABLE_TRACES: 'no'

    WORKER_PUBLIC_ACCESS: 'true'

    DISABLE_REMOTE_LOGGING_CONTROL: 'no'

    RDA_SELF_HEALTH_RESTART_AFTER_FAILURES: 3

  extraEnvs:

  - name: http_proxy

    value: http://test:<password>@<proxy-host>:3128

  - name: https_proxy

    value: http://test:<password>@<proxy-host>:3128

  - name: HTTP_PROXY

    value: http://test:<password>@<proxy-host>:3128

  - name: HTTPS_PROXY

    value: http://test:<password>@<proxy-host>:3128

  ....

  ....

Step-1: Please run the below command to initiate upgrading the RDA Worker service PODs.

Step-2: Run the below command to check the status of the existing and newer PODs and make sure at least one instance of each RDA Worker service POD is in Terminating state.

NAME READY STATUS RESTARTS AGE

rda-worker-7c5b47bfc8-fdwb4 1/1 Running 0 6m23s

rda-worker-7c5b47bfc8-pcghs 1/1 Running 0 5m8s

Step-3: Run the below command to put all Terminating RDAF worker service PODs into maintenance mode. It will list all of the POD Ids of RDA worker services along with rdac maintenance command that is required to be put in maintenance mode.

Step-4: Copy & Paste the rdac maintenance command as below.

Step-5: Run the below command to verify the maintenance mode status of the RDAF worker services.

Step-6: Run the below command to delete the Terminating RDAF worker service PODs

for i in `kubectl get pods -n rda-fabric -l app_component=rda-worker | grep 'Terminating' | awk '{print $1}'`; do kubectl delete pod $i -n rda-fabric --force; done

Note

Wait for 120 seconds between each RDAF worker service upgrade by repeating above steps from Step-2 to Step-6 for rest of the RDAF worker service PODs.

Step-7: Please wait for 120 seconds to let the newer version of RDA Worker service PODs join the RDA Fabric appropriately. Run the below commands to verify the status of the newer RDA Worker service PODs.

+------------+----------------+-------------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+----------------+-------------------+--------------+---------+

| rda-worker | 192.168.108.17 | Up 6 Minutes ago | cfcca2c11c9a | 8.1.0.2 |

| rda-worker | 192.168.108.18 | Up 5 Minutes ago | 209598b9d921 | 8.1.0.2 |

+------------+----------------+-------------------+--------------+---------+

Step-8: Run the below command to check that all RDA Worker services have ok status and do not report any failure messages.

Note

If the worker was deployed in an HTTP proxy environment, please make sure the required HTTP proxy environment variables are added in the /opt/rdaf/deployment-scripts/values.yaml file under the rda_worker configuration section as shown below before upgrading RDA Worker services.

rda_worker:

  mem_limit: 8G

  memswap_limit: 8G

  privileged: false

  environment:

    RDA_ENABLE_TRACES: 'no'

    RDA_SELF_HEALTH_RESTART_AFTER_FAILURES: 3

    http_proxy: "http://test:<password>@<proxy-host>:3128"

    https_proxy: "http://test:<password>@<proxy-host>:3128"

    HTTP_PROXY: "http://test:<password>@<proxy-host>:3128"

    HTTPS_PROXY: "http://test:<password>@<proxy-host>:3128"

- Upgrade RDA Worker Services

Please run the below command to initiate upgrading the RDA Worker Service with zero downtime

Note

The timeout value <10> in the above command is in seconds.

Note

The rolling-upgrade option upgrades the Worker services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of Worker services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

After completing the Worker services upgrade on all VMs, it will ask for user confirmation, the user has to provide YES to delete the older version Worker service PODs.

Digest: sha256:728962901928f166dfc1a3d5d7ad931c133621d1abac598e140af3249905836c

Status: Downloaded newer image for 192.168.108.122:5000/ubuntu-rda-worker-all:8.1.0.2

192.168.108.122:5000/ubuntu-rda-worker-all:8.1.0.2

2025-10-30 10:52:34,199 [rdaf.component.worker] INFO - Collecting worker details for rolling upgrade

2025-10-30 10:52:45,508 [rdaf.component.worker] INFO - Rolling upgrade worker on 192.168.108.127

+----------+----------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------+---------+---------+--------------+-------------+------------+

| c2544331 | worker | 8.1.0.1 | 4:10:38 | 5fac8ebe85fe | None | True |

+----------+----------+---------+---------+--------------+-------------+------------+

Continue moving above pod to maintenance mode? [yes/no]: yes

2025-10-30 10:54:30,732 [rdaf.component.worker] INFO - Initiating maintenance mode for pod c2544331

2025-10-30 10:54:52,747 [rdaf.component.worker] INFO - Following worker container is in maintenance mode

+----------+----------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------+---------+---------+--------------+-------------+------------+

| c2544331 | worker | 8.1.0.1 | 4:12:43 | 5fac8ebe85fe | maintenance | False |

+----------+----------+---------+---------+--------------+-------------+------------+

Please run the below command to upgrade the RDA Worker Service without the zero-downtime (rolling upgrade) option

Please wait for 120 seconds to let the newer version of RDA Worker service containers join the RDA Fabric appropriately. Run the below commands to verify the status of the newer RDA Worker service containers.

| Infra | worker | True | 6eff605e72c4 | a318f394 | rda-site-01 | 13:45:13 | 4 | 31.21 | 0 | 0 |

| Infra | worker | True | ae7244d0d10a | 554c2cd8 | rda-site-01 | 13:40:40 | 4 | 31.21 | 0 | 0 |

+------------+-----------------+--------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+-----------------+--------------+--------------+---------+

| rda_worker | 192.168.108.127 | Up 5 minutes | aa6a0511ec07 | 8.1.0.2 |

| rda_worker | 192.168.108.128 | Up 3 minutes | dc48f06b1778 | 8.1.0.2 |

+------------+-----------------+--------------+--------------+---------+

Run the below command to check that all RDA Worker services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------|

| rda_infra | api-server | 1b0542719618 | 1845ae67 | | service-status | ok | |

| rda_infra | api-server | 1b0542719618 | 1845ae67 | | 192.168-connectivity | ok | |

| rda_infra | api-server | d4404cffdc7a | a4cfdc6d | | service-status | ok | |

| rda_infra | api-server | d4404cffdc7a | a4cfdc6d | | 192.168-connectivity | ok | |

| rda_infra | asm | 8d3d52a7a475 | 418c9dc1 | | service-status | ok | |

| rda_infra | asm | 8d3d52a7a475 | 418c9dc1 | | 192.168-connectivity | ok | |

| rda_infra | asm | ab172a9b8229 | 2ac1d67a | | service-status | ok | |

| rda_infra | asm | ab172a9b8229 | 2ac1d67a | | 192.168-connectivity | ok | |

| rda_app | asset-dependency | 6ac69ca1085c | c2e9dcb9 | | service-status | ok | |

| rda_app | asset-dependency | 6ac69ca1085c | c2e9dcb9 | | 192.168-connectivity | ok | |

| rda_app | asset-dependency | 58a5f4f460d3 | 0b91caac | | service-status | ok | |

| rda_app | asset-dependency | 58a5f4f460d3 | 0b91caac | | 192.168-connectivity | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | service-status | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | 192.168-connectivity | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | DB-connectivity | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | service-status | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | 192.168-connectivity | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | DB-connectivity | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | service-status | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | 192.168-connectivity | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | service-initialization-status | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | DB-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+
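Scanning a long health-check table by eye is error-prone, so the check can be automated. The sketch below is an illustration that assumes the table has been saved as text. The function name `failing_checks` is hypothetical, and the `failed` status and its message in the sample are invented for demonstration (the output above only shows `ok`).

```python
def failing_checks(table_text):
    """Return (pod_type, pod_id, health_parameter, status) for rows whose Status is not 'ok'."""
    header = None
    failures = []
    for raw in table_text.splitlines():
        line = raw.strip()
        # Skip '+---+' borders, the '|----|' separator row, and blank lines.
        if not line.startswith("|") or line.startswith("|-"):
            continue
        cells = [cell.strip() for cell in line.strip("|").split("|")]
        if header is None:
            header = cells
            continue
        row = dict(zip(header, cells))
        if row.get("Status", "").lower() != "ok":
            failures.append(
                (row.get("Pod-Type"), row.get("ID"), row.get("Health Parameter"), row.get("Status"))
            )
    return failures


# Shortened sample; the second data row is a made-up failure for illustration.
SAMPLE = """
+-----------+------------+--------------+----------+------+------------------+--------+---------+
| Cat       | Pod-Type   | Host         | ID       | Site | Health Parameter | Status | Message |
|-----------+------------+--------------+----------+------+------------------+--------+---------|
| rda_infra | api-server | 1b0542719618 | 1845ae67 |      | service-status   | ok     |         |
| rda_infra | asm        | 8d3d52a7a475 | 418c9dc1 |      | service-status   | failed | example |
+-----------+------------+--------------+----------+------+------------------+--------+---------+
"""

if __name__ == "__main__":
    print(failing_checks(SAMPLE))  # → [('asm', '418c9dc1', 'service-status', 'failed')]
```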

1.2.4 Upgrade OIA Application Services

Step-1: Run the below commands to initiate upgrading the following RDAF OIA Application services

rdafk8s app upgrade OIA --tag 8.1.0.2 --service rda-alert-ingester --service rda-alert-processor --service rda-alert-correlator --service rda-alert-processor-companion

Step-2: Run the below command to check the status of the newly upgraded PODs.

Step-3: Run the below command to put all Terminating OIA application service PODs into maintenance mode. It lists the POD IDs of the OIA application services that need to be put into maintenance mode, along with the corresponding rdac maintenance command.

Step-4: Copy and paste the rdac maintenance command, as shown below.

Step-5: Run the below command to verify the maintenance mode status of the OIA application services.

Step-6: Run the below command to delete the Terminating OIA application service PODs

for i in $(kubectl get pods -n rda-fabric -l app_name=oia | grep 'Terminating' | awk '{print $1}'); do kubectl delete pod "$i" -n rda-fabric --force; done
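To preview which PODs the loop above would delete, the same filter can be applied to saved `kubectl get pods` output. This is a minimal sketch: the function name `terminating_pods` and the pod names in the sample are hypothetical. Unlike `grep`, which matches the word anywhere on the line, it checks only the STATUS column.

```python
def terminating_pods(kubectl_output):
    """Return the names of pods whose STATUS column (third field) is 'Terminating'."""
    names = []
    for line in kubectl_output.splitlines():
        fields = line.split()
        # Default column order of `kubectl get pods`: NAME READY STATUS RESTARTS AGE
        if len(fields) >= 3 and fields[2] == "Terminating":
            names.append(fields[0])
    return names


# Hypothetical output; real pod names will differ.
SAMPLE = """\
NAME                                 READY   STATUS        RESTARTS   AGE
rda-alert-ingester-7d9c5b6f4-abcde   1/1     Running       0          3m
rda-alert-ingester-6b8f7c9d5-fghij   1/1     Terminating   0          4h
rda-alert-processor-5c7d8e9f6-klmno  1/1     Terminating   0          4h
"""

if __name__ == "__main__":
    for name in terminating_pods(SAMPLE):
        print(name)
```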

Note

Wait for 120 seconds, then repeat Step-2 through Step-6 for the rest of the OIA application service PODs.

Please wait till all of the new OIA application service PODs are in Running state and run the below command to verify their status and make sure they are running with 8.1.0.2 / 8.1.0.2.1 version.

+-------------------------------+-----------------+-----------------+--------------+-----------+

| Name | Host | Status | Container Id | Tag |

+-------------------------------+-----------------+-----------------+--------------+-----------+

| rda-alert-correlator | 192.168.108.120 | Up 5 Hours ago | de58c823d265 | 8.1.0.2 |

| rda-alert-correlator | 192.168.108.119 | Up 5 Hours ago | 7ccfb9832d63 | 8.1.0.2 |

| rda-alert-ingester | 192.168.108.120 | Up 5 Hours ago | d9722596015a | 8.1.0.2 |

| rda-alert-ingester | 192.168.108.119 | Up 5 Hours ago | 2d73cfed8226 | 8.1.0.2 |

| rda-alert-processor | 192.168.108.120 | Up 5 Hours ago | 3349c4455841 | 8.1.0.2 |

| rda-alert-processor | 192.168.108.119 | Up 5 Hours ago | 3f17dde3eed2 | 8.1.0.2 |

| rda-alert-processor-companion | 192.168.108.119 | Up 5 Hours ago | ec87f1383f2a | 8.1.0.2 |

| rda-alert-processor-companion | 192.168.108.120 | Up 5 Hours ago | eda5b39c3da1 | 8.1.0.2 |

| rda-app-controller | 192.168.108.119 | Up 23 Hours ago | cb51cf3875ad | 8.1.0.1 |

| rda-app-controller | 192.168.108.120 | Up 23 Hours ago | 83b2d405f6ee | 8.1.0.1 |

| rda-collaboration | 192.168.108.119 | Up 5 Hours ago | a16102be5b3f | 8.1.0.2.1 |

| rda-collaboration | 192.168.108.120 | Up 5 Hours ago | b9779202b517 | 8.1.0.2.1 |

| rda-configuration-service | 192.168.108.119 | Up 23 Hours ago | 2666a70fd84b | 8.1.0.1 |

| rda-configuration-service | 192.168.108.120 | Up 23 Hours ago | fa90a76ec426 | 8.1.0.1 |

| rda-event-consumer | 192.168.108.120 | Up 5 Hours ago | 339cb5f787a7 | 8.1.0.2.1 |

| rda-event-consumer | 192.168.108.119 | Up 5 Hours ago | 85a539443123 | 8.1.0.2.1 |

+-------------------------------+-----------------+-----------------+--------------+-----------+
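Instead of waiting a fixed time and re-running the status command by hand, the wait can be expressed as a polling loop. The sketch below is generic and not part of the RDAF tooling: `wait_until` is a hypothetical helper, and the `check` callable (for example, a function that runs the status command and confirms all PODs are Running) must be supplied by the user.

```python
import time


def wait_until(check, timeout=120.0, interval=10.0, sleep=time.sleep, clock=time.monotonic):
    """Call check() every `interval` seconds until it returns True or `timeout` seconds elapse.

    Returns True if check() succeeded, False if the timeout was reached first.
    `sleep` and `clock` are injectable so the loop can be tested without real waiting.
    """
    deadline = clock() + timeout
    while True:
        if check():
            return True
        if clock() >= deadline:
            return False
        sleep(interval)


if __name__ == "__main__":
    # Trivial check that succeeds immediately; replace with a real status check.
    print(wait_until(lambda: True))  # → True
```

The 120-second default mirrors the wait time given in the steps above. The 10-second polling interval is an arbitrary choice.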

Step-7: Run the below command to verify all OIA application services are up and running.

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------|

| App | alert-ingester | True | rda-alert-inge | 6a6e464d | | 19:19:06 | 8 | 31.33 | | |

| App | alert-ingester | True | rda-alert-inge | 7f6b42a0 | | 19:19:23 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | a880e491 | | 19:19:51 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | b684609e | | 19:19:48 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 874f3b33 | | 19:18:54 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 70cadaa7 | | 19:18:35 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | bde06c15 | | 19:44:20 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | 47b9eb02 | | 19:44:08 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | faa33e1b | | 19:44:22 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | 36083c36 | | 19:44:16 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | 5fd3c3f4 | | 19:19:39 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | d66e5ce8 | | 19:19:26 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | ecbb535c | | 19:44:16 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | 9a05db5a | | 19:44:06 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 61b3c53b | | 19:18:48 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 09b9474e | | 19:18:27 | 8 | 31.33 | | |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------|

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-initialization-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=0, Brokers=[0, 1, 2] |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | kafka-consumer | ok | Health: [{'387c0cb507b84878b9d0b15222cb4226.inbound-events': 0, '387c0cb507b84878b9d0b15222cb4226.mapped-events': 0}, {}] |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-initialization-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | kafka-consumer | ok | Health: [{'387c0cb507b84878b9d0b15222cb4226.inbound-events': 0, '387c0cb507b84878b9d0b15222cb4226.mapped-events': 0}, {}] |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=1, Brokers=[0, 1, 2] |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-status | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | 192.168-connectivity | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-dependency:cfx-app-controller | ok | 2 pod(s) found for cfx-app-controller |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-initialization-status | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=1, Brokers=[0, 1, 2] |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | DB-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

Run the below commands to initiate upgrading the following RDA Fabric OIA Application services with zero downtime

rdaf app upgrade OIA --tag 8.1.0.2 --rolling-upgrade --timeout 10 --service cfx-rda-alert-ingester --service cfx-rda-alert-processor --service cfx-rda-alert-processor-companion --service cfx-rda-alert-correlator

rdaf app upgrade OIA --tag 8.1.0.2.1 --rolling-upgrade --timeout 10 --service cfx-rda-event-consumer --service cfx-rda-collaboration

Note

The timeout value <10> in the above command is in seconds.

Note

The rolling-upgrade option upgrades the OIA application services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of OIA application services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

After completing the OIA application services upgrade on all VMs, it will ask for user confirmation to delete the older version OIA application service PODs.
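Because the two tags apply to different service sets, it can be convenient to keep the tag-to-service mapping in one place and generate the command lines from it. The sketch below only assembles the command strings and does not run them. It uses the flags exactly as they appear in the commands above. `build_upgrade_command` and `PLAN` are hypothetical names.

```python
import shlex


def build_upgrade_command(tag, services, rolling=True, timeout=10):
    """Return the argument list for an `rdaf app upgrade OIA` call, mirroring the commands above."""
    argv = ["rdaf", "app", "upgrade", "OIA", "--tag", tag]
    if rolling:
        argv += ["--rolling-upgrade", "--timeout", str(timeout)]
    for service in services:
        argv += ["--service", service]
    return argv


# Tag-to-service mapping taken from the two upgrade commands in this section.
PLAN = {
    "8.1.0.2": [
        "cfx-rda-alert-ingester",
        "cfx-rda-alert-processor",
        "cfx-rda-alert-processor-companion",
        "cfx-rda-alert-correlator",
    ],
    "8.1.0.2.1": ["cfx-rda-event-consumer", "cfx-rda-collaboration"],
}

if __name__ == "__main__":
    for tag, services in PLAN.items():
        print(shlex.join(build_upgrade_command(tag, services)))
```

Passing `rolling=False` yields the form of the command without the `--rolling-upgrade` and `--timeout` options.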

2025-10-30 11:23:36,923 [rdaf.component.oia] INFO - Gathering OIA app container details.

2025-10-30 11:23:38,026 [rdaf.component.oia] INFO - Gathering rdac pod details.

+----------+-----------------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+-----------------------+---------+---------+--------------+-------------+------------+

| 1c14f6eb | alert-ingester | 8.1.0.1 | 4:29:25 | c236e62139f8 | None | True |

| 66ed0060 | alert-processor | 8.1.0.1 | 4:27:14 | c6672a224c54 | None | True |

| b95a768f | event-consumer | 8.1.0.1 | 4:27:48 | 3f4516b3e057 | None | True |

| acecb0b7 | alert-processor- | 8.1.0.1 | 4:23:44 | 02cf84e94ea7 | None | True |

| | companion | | | | | |

| 75c30a77 | alert-correlator | 8.1.0.1 | 4:26:41 | 895c6b108728 | None | True |

| 73cc3ae8 | cfxdimensions-app- | 8.1.0.1 | 4:24:55 | b8d988286bf3 | None | True |

| | collaboration | | | | | |

+----------+-----------------------+---------+---------+--------------+-------------+------------+

Continue moving above pods to maintenance mode? [yes/no]: yes

2025-10-30 11:23:55,371 [rdaf.component.oia] INFO - Initiating Maintenance Mode...

2025-10-30 11:24:18,171 [rdaf.component.oia] INFO - Following container are in maintenance mode

+----------+-----------------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+-----------------------+---------+---------+--------------+-------------+------------+

| 75c30a77 | alert-correlator | 8.1.0.1 | 4:27:06 | 895c6b108728 | maintenance | False |

| 1c14f6eb | alert-ingester | 8.1.0.1 | 4:29:50 | c236e62139f8 | maintenance | False |

| 66ed0060 | alert-processor | 8.1.0.1 | 4:27:39 | c6672a224c54 | maintenance | False |

| acecb0b7 | alert-processor- | 8.1.0.1 | 4:24:09 | 02cf84e94ea7 | maintenance | False |

| | companion | | | | | |

| 73cc3ae8 | cfxdimensions-app- | 8.1.0.1 | 4:25:21 | b8d988286bf3 | maintenance | False |

| | collaboration | | | | | |

| b95a768f | event-consumer | 8.1.0.1 | 4:28:13 | 3f4516b3e057 | maintenance | False |

+----------+-----------------------+---------+---------+--------------+-------------+------------+

Run the below commands to upgrade the RDA Fabric OIA Application services without the zero-downtime (rolling upgrade) option

rdaf app upgrade OIA --tag 8.1.0.2 --service cfx-rda-alert-ingester --service cfx-rda-alert-processor --service cfx-rda-alert-processor-companion --service cfx-rda-alert-correlator

rdaf app upgrade OIA --tag 8.1.0.2.1 --service cfx-rda-event-consumer --service cfx-rda-collaboration

Please wait till all of the new OIA application service containers are in Up state and run the below command to verify their status and make sure they are running with 8.1.0.2 / 8.1.0.2.1 version.

+-----------------------------------+-----------------+-------------+--------------+-------------+

| Name | Host | Status | Container Id | Tag |

+-----------------------------------+-----------------+-------------+--------------+-------------+

| cfx-rda-app-controller | 192.168.105.131 | Up 3 days | 13bbf3d74c4e | 8.1.0.1 |

| cfx-rda-reports-registry | 192.168.105.131 | Up 3 days | 606bd2414e74 | 8.1.0.1 |

| cfx-rda-notification-service | 192.168.105.131 | Up 3 days | 70254879deaf | 8.1.0.1 |

| cfx-rda-file-browser | 192.168.105.131 | Up 3 days | ee3ac9c2604d | 8.1.0.1 |

| cfx-rda-configuration-service | 192.168.105.131 | Up 3 days | 648580eb4291 | 8.1.0.1 |

| cfx-rda-alert-ingester | 192.168.105.131 | Up 20 hours | 433ba299b339 | 8.1.0.2 |

| cfx-rda-webhook-server | 192.168.105.131 | Up 3 days | 55ce34134b88 | 8.1.0.1 |

| cfx-rda-smtp-server | 192.168.105.131 | Up 3 days | 7a2160d536f7 | 8.1.0.1 |

| cfx-rda-event-consumer | 192.168.105.131 | Up 20 hours | 8c17a6409151 | 8.1.0.2.1 |

| cfx-rda-alert-processor | 192.168.105.131 | Up 20 hours | 209deec4f209 | 8.1.0.2 |

| cfx-rda-alert-correlator | 192.168.105.131 | Up 20 hours | 0c9c99a2c64b | 8.1.0.2 |

| cfx-rda-irm-service | 192.168.105.131 | Up 3 days | 0833745ca4d9 | 8.1.0.1 |

| cfx-rda-ml-config | 192.168.105.131 | Up 3 days | b04b6b341a73 | 8.1.0.1 |

| cfx-rda-collaboration | 192.168.105.131 | Up 20 hours | 89dbb9a7fd2a | 8.1.0.2.1 |

| cfx-rda-ingestion-tracker | 192.168.105.131 | Up 3 days | 6df3071b5101 | 8.1.0.1 |

| cfx-rda-alert-processor-companion | 192.168.105.131 | Up 20 hours | c0b6577c9ca6 | 8.1.0.2 |

+-----------------------------------+-----------------+-------------+--------------+-------------+
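Since only selected services are upgraded and they land on two different tags, a per-service comparison is more reliable than checking for a single tag. The following sketch assumes the status table is available as text. `EXPECTED` and `tag_mismatches` are hypothetical names, and the stale `cfx-rda-collaboration` row in the sample is invented to show a mismatch.

```python
# Expected tags for the services upgraded in this section.
EXPECTED = {
    "cfx-rda-alert-ingester": "8.1.0.2",
    "cfx-rda-alert-processor": "8.1.0.2",
    "cfx-rda-alert-processor-companion": "8.1.0.2",
    "cfx-rda-alert-correlator": "8.1.0.2",
    "cfx-rda-event-consumer": "8.1.0.2.1",
    "cfx-rda-collaboration": "8.1.0.2.1",
}


def tag_mismatches(status_text, expected=EXPECTED):
    """Return (service, host, actual_tag) for upgraded services not on their expected tag."""
    mismatches = []
    for line in status_text.splitlines():
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        # Data rows have five columns: Name, Host, Status, Container Id, Tag.
        if len(cells) != 5 or cells[0] not in expected:
            continue  # borders, header, and services outside the upgrade scope
        name, host, tag = cells[0], cells[1], cells[4]
        if tag != expected[name]:
            mismatches.append((name, host, tag))
    return mismatches


# Shortened sample; the collaboration row is deliberately stale for illustration.
SAMPLE = """
| Name                   | Host            | Status      | Container Id | Tag       |
| cfx-rda-app-controller | 192.168.105.131 | Up 3 days   | 13bbf3d74c4e | 8.1.0.1   |
| cfx-rda-alert-ingester | 192.168.105.131 | Up 20 hours | 433ba299b339 | 8.1.0.2   |
| cfx-rda-event-consumer | 192.168.105.131 | Up 20 hours | 8c17a6409151 | 8.1.0.2.1 |
| cfx-rda-collaboration  | 192.168.105.131 | Up 3 days   | 89dbb9a7fd2a | 8.1.0.1   |
"""

if __name__ == "__main__":
    print(tag_mismatches(SAMPLE))  # → [('cfx-rda-collaboration', '192.168.105.131', '8.1.0.1')]
```

Services that are not part of this upgrade, such as `cfx-rda-app-controller`, are ignored and may remain on 8.1.0.1.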

Run the below command to verify all OIA application services are up and running.

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------|

| App | alert-ingester | True | rda-alert-inge | 6a6e464d | | 19:22:36 | 8 | 31.33 | | |

| App | alert-ingester | True | rda-alert-inge | 7f6b42a0 | | 19:22:53 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | a880e491 | | 19:23:21 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | b684609e | | 19:23:18 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 874f3b33 | | 19:22:24 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 70cadaa7 | | 19:22:05 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | bde06c15 | | 19:47:50 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | 47b9eb02 | | 19:47:38 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | faa33e1b | | 19:47:52 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | 36083c36 | | 19:47:46 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | 5fd3c3f4 | | 19:23:09 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | d66e5ce8 | | 19:22:56 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | ecbb535c | | 19:47:46 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | 9a05db5a | | 19:47:36 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 61b3c53b | | 19:22:18 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 09b9474e | | 19:21:57 | 8 | 31.33 | | |

| App | cfxdimensions-app-file-browser | True | rda-file-brows | 00495640 | | 19:22:45 | 8 | 31.33 | | |

| App | cfxdimensions-app-file-browser | True | rda-file-brows | 640f0653 | | 19:22:29 | 8 | 31.33 | | |

| App | cfxdimensions-app-irm_service | True | rda-irm-servic | 27e345c5 | | 19:21:43 | 8 | 31.33 | | |

| App | cfxdimensions-app-irm_service | True | rda-irm-servic | 23c7e082 | | 19:21:56 | 8 | 31.33 | | |

| App | cfxdimensions-app-notification-service | True | rda-notificati | bbb5b08b | | 19:23:20 | 8 | 31.33 | | |

| App | cfxdimensions-app-notification-service | True | rda-notificati | 9841bcb5 | | 19:23:02 | 8 | 31.33 | | |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------|

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-initialization-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=1, Brokers=[1, 2, 3] |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | 192.168-connectivity | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-initialization-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=2, Brokers=[1, 2, 3] |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | service-status | ok | |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | 192.168-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+

1.2.5 Upgrade Event Gateway Services

Note

This upgrade applies only to Non-K8s deployments.

Step 1. Prerequisites

- Event Gateway with the 8.1.0.1 tag should already be installed

Note

If the Event Gateway was deployed using the RDAF CLI, follow Step 2 and skip Step 3. If it was not deployed using the RDAF CLI, go to Step 3.

Step 2. Upgrade Event Gateway Using RDAF CLI

- To upgrade the event gateway, log in to the rdaf cli VM and execute the following command.

Step 3. Upgrade Event Gateway Using Docker Compose File

- Login to the Event Gateway installed VM

- Navigate to the location where Event Gateway was previously installed, using the following command

- Edit the docker-compose file for the Event Gateway using a local editor (e.g. vi), update the tag, and save it

version: '3.1'
services:
  rda_event_gateway:
    image: docker1.cloudfabrix.io:443/external/ubuntu-rda-event-gateway:8.1.0.2
    restart: always
    network_mode: host
    mem_limit: 6G
    memswap_limit: 6G
    volumes:
      - /opt/rdaf/network_config:/network_config
      - /opt/rdaf/event_gateway/config:/event_gw_config
      - /opt/rdaf/event_gateway/certs:/certs
      - /opt/rdaf/event_gateway/logs:/logs
      - /opt/rdaf/event_gateway/log_archive:/tmp/log_archive
    logging:
      driver: "json-file"
      options:
        max-size: "25m"
        max-file: "5"
    environment:
      RDA_NETWORK_CONFIG: /network_config/rda_network_config.json
      EVENT_GW_MAIN_CONFIG: /event_gw_config/main/main.yml
      EVENT_GW_SNMP_TRAP_CONFIG: /event_gw_config/snmptrap/trap_template.json
      EVENT_GW_SNMP_TRAP_ALERT_CONFIG: /event_gw_config/snmptrap/trap_to_alert_go.yaml
      AGENT_GROUP: event_gateway_site01
      EVENT_GATEWAY_CONFIG_DIR: /event_gw_config
      LOGGER_CONFIG_FILE: /event_gw_config/main/logging.yml

- Please run the following commands

- Use the command as shown below to ensure that the RDA docker instances are up and running.

- Use the below mentioned command to check docker logs for any errors

+-------------------+---------------+---------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+-------------------+---------------+---------------+--------------+---------+

| rda_event_gateway | 192.168.108.127 | Up 42 hours | c22b1cf6900e | 8.1.0.2 |

| rda_event_gateway | 192.168.108.128 | Up 42 hours | 36b86a7bdff3 | 8.1.0.2 |

+-------------------+---------------+---------------+--------------+---------+
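The manual tag edit in Step 3 can also be done programmatically, which avoids accidental changes to other lines of the docker-compose file. This is a minimal sketch under stated assumptions: `set_gateway_tag` is a hypothetical helper that works on the file content as a string, and the sample snippet assumes the pre-upgrade image tag is 8.1.0.1. Reading and writing the actual file is left out.

```python
import re

# Matches the image line of the event gateway service and captures the current tag.
IMAGE_RE = re.compile(
    r"^([ \t]*image:[ \t]*\S+/ubuntu-rda-event-gateway:)(\S+)[ \t]*$",
    re.MULTILINE,
)


def set_gateway_tag(compose_text, new_tag):
    """Return compose_text with the event gateway image tag replaced by new_tag."""
    # A function replacement avoids backreference ambiguity with tags that start with digits.
    updated, count = IMAGE_RE.subn(lambda m: m.group(1) + new_tag, compose_text)
    if count != 1:
        raise ValueError(f"expected exactly one event gateway image line, found {count}")
    return updated


# Shortened compose content; the 8.1.0.1 tag is the assumed pre-upgrade state.
SAMPLE = """\
version: '3.1'
services:
  rda_event_gateway:
    image: docker1.cloudfabrix.io:443/external/ubuntu-rda-event-gateway:8.1.0.1
    restart: always
    network_mode: host
"""

if __name__ == "__main__":
    print(set_gateway_tag(SAMPLE, "8.1.0.2"))
```

Raising an error when the image line is missing or duplicated keeps the helper from silently leaving the file unchanged.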

1.2.6 Prune Images

After upgrading the services, run the below command to clean up unused docker images and free up disk space.

Note

If any incident page fails to load, restart the IRM pods sequentially, one at a time. Additionally, follow the steps below to update the Jinja template.

1.3 Update Jinja Template

To update the Jinja template, please follow the steps below:

1. Download the Jinja template from the following link to update incident-details-metrics:

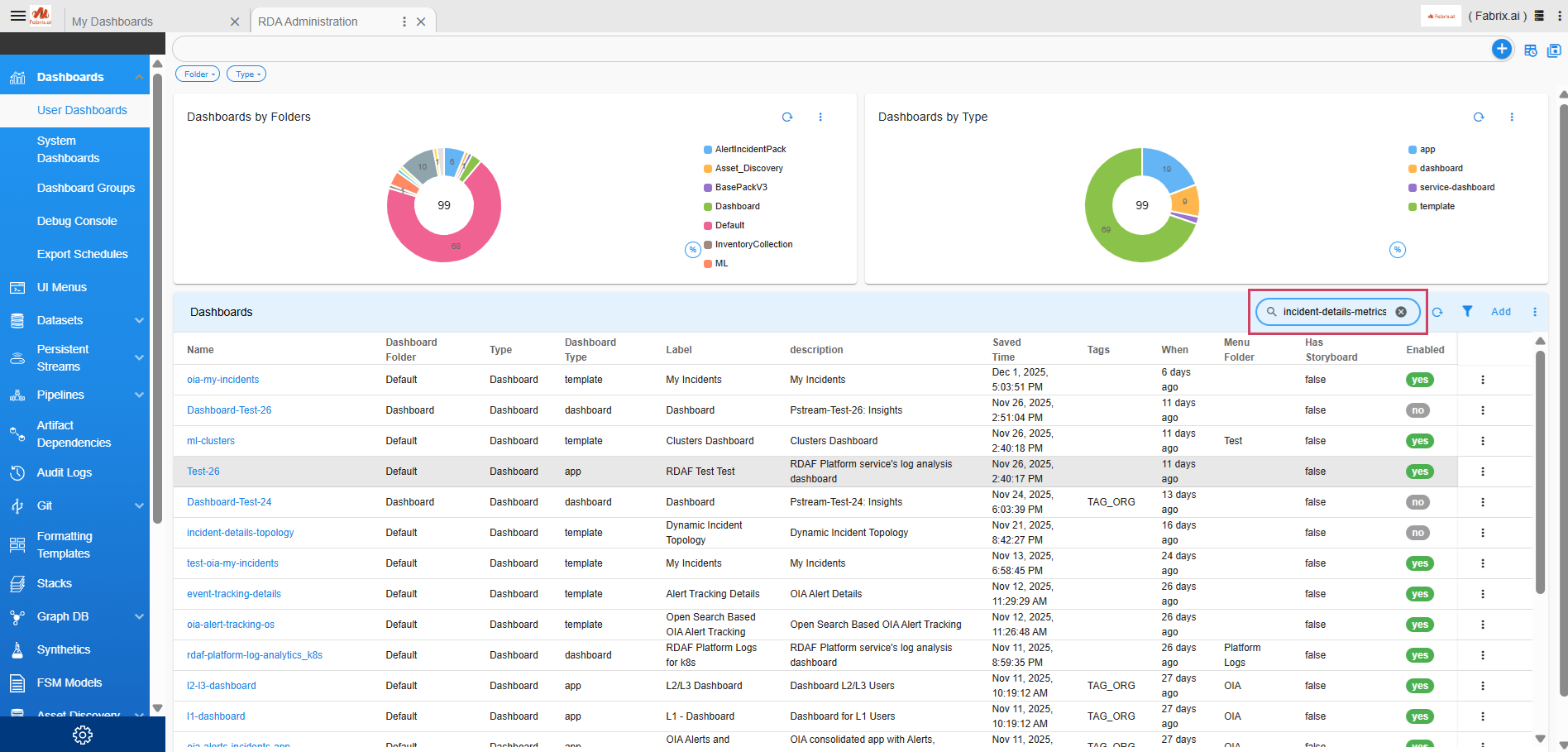

2. Navigate to Main Menu → RDA Administration → User Dashboards.

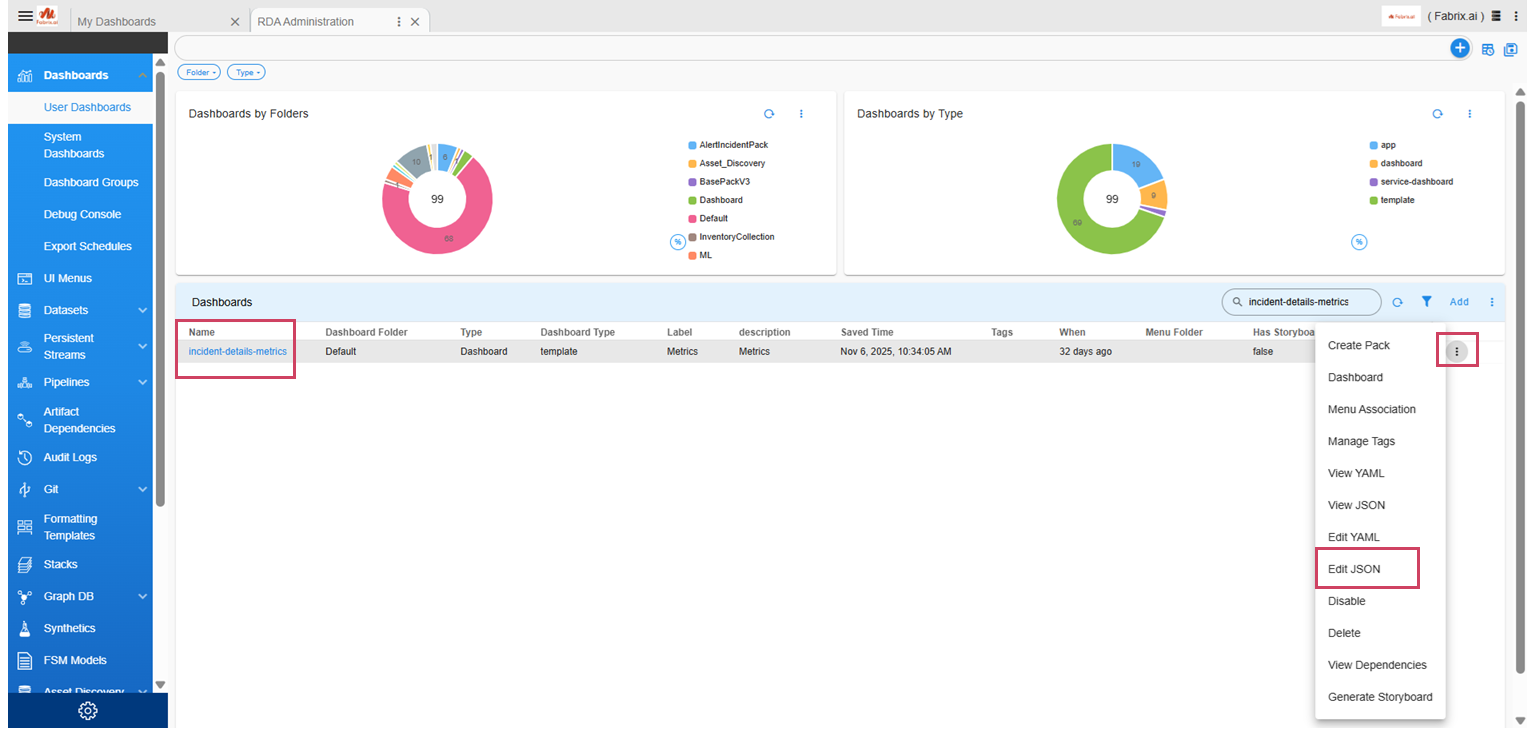

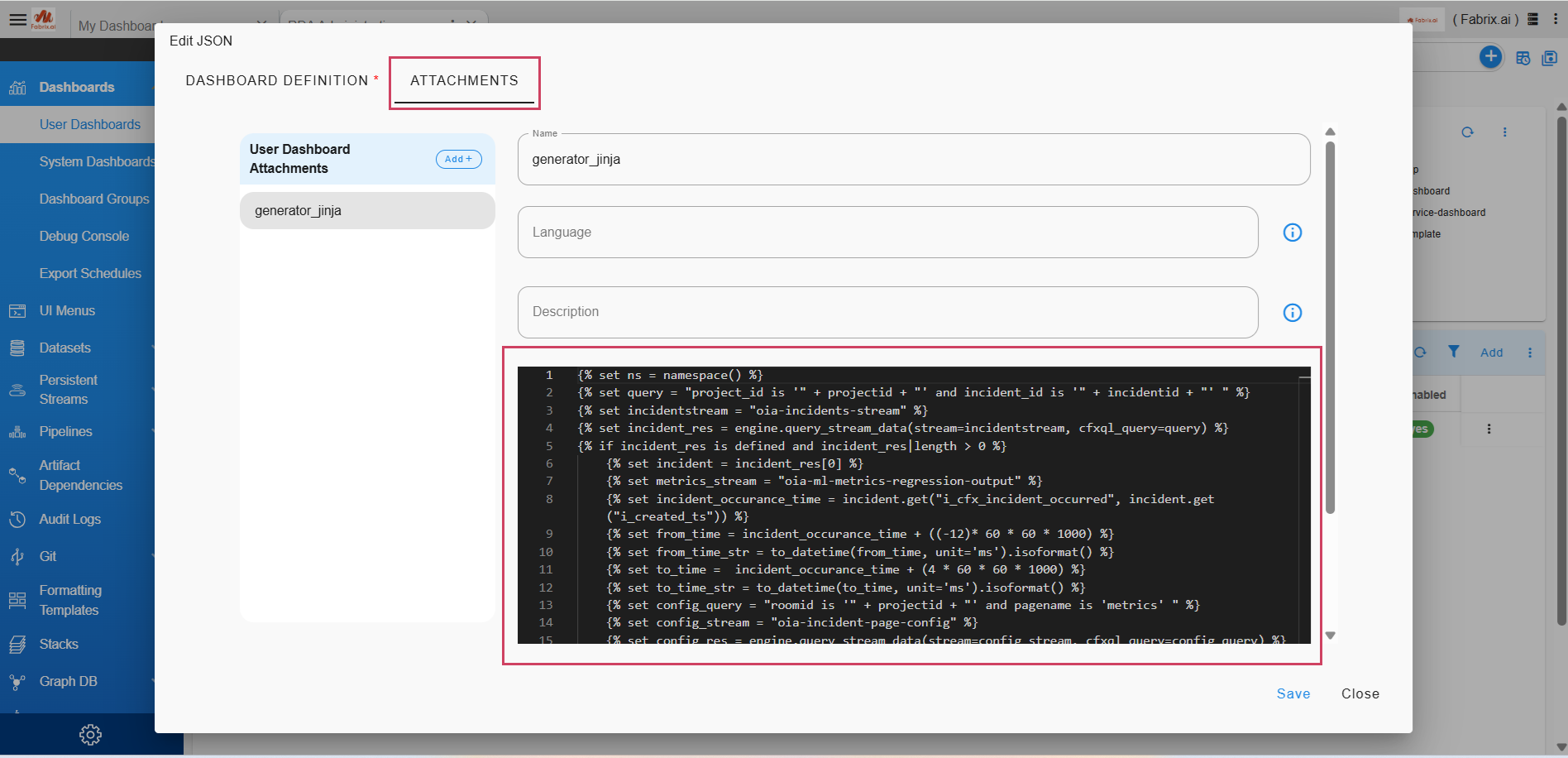

3. In the search box of the tabular report, search for incident-details-metrics. Click on the three dots to open the menu for the table row where the incident-details-metrics dashboard is listed, then click on Edit JSON. Click on Attachments and replace the existing text with the text provided in the link above.

4. Replace the existing content with the downloaded Jinja template and click Save.